QUANTUM AND NEUROMORPHIC COMPUTING ON FPGA

The Neuromorphic Computing Unit carries out most of its engineering work on Field-Programmable Gate Arrays (FPGAs). FPGAs are specialized chips built from reconfigurable digital logic: a programmed FPGA physically implements the digital circuit defined by the programmer.

These devices enable us to harness the advantages of neuromorphic architectures, whose benefits depend on building purpose-specific digital circuits, for example circuits that perform event-based computing. While this type of computing is not widely used in the traditional chip manufacturing industry, it is essential for exploiting the capabilities of neuromorphic systems.
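As a rough illustration of event-based computing, the following Python sketch models a leaky integrate-and-fire neuron that does work only when an input spike arrives, rather than on every clock tick. The class name `EventDrivenLIF`, the parameter values, and the spike list are hypothetical choices made for this example; it is a software analogy under those assumptions, not a description of ITCL's FPGA designs.

```python
import math


class EventDrivenLIF:
    """Leaky integrate-and-fire neuron updated only when a spike arrives.

    All names and default values here are illustrative assumptions.
    """

    def __init__(self, tau=20.0, threshold=1.0):
        self.tau = tau              # membrane time constant (arbitrary time units)
        self.threshold = threshold  # firing threshold
        self.potential = 0.0        # membrane potential after the last event
        self.last_time = 0.0        # timestamp of the last processed event

    def on_spike(self, time, weight):
        """Process one input spike; return True if the neuron fires."""
        # Apply the exponential leak for the whole idle interval in one step,
        # so no computation happens between events.
        self.potential *= math.exp(-(time - self.last_time) / self.tau)
        self.last_time = time
        self.potential += weight
        if self.potential >= self.threshold:
            self.potential = 0.0    # reset after firing
            return True
        return False


def run(events, neuron):
    """Feed (time, weight) events in order; return the times of output spikes."""
    return [time for time, weight in events if neuron.on_spike(time, weight)]


if __name__ == "__main__":
    spikes = [(1.0, 0.6), (2.0, 0.6), (50.0, 0.6), (51.0, 0.6)]
    print(run(spikes, EventDrivenLIF()))
```

Two spikes arriving close together push the potential over the threshold, whereas isolated spikes leak away before the next one arrives. The cost of the computation scales with the number of events, not with elapsed time, which is the property that event-driven hardware aims to exploit.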

In addition, FPGAs are used in numerous applications where no other type of chip can compete, such as software-defined radios, signal processing, or sensor fusion. Programming FPGAs is a highly specialized task that requires knowledge of specific programming languages, FPGA vendor tools, and unique debugging methodologies.

The Quantum Computing research group at ITCL is dedicated to the development of quantum and quantum-inspired algorithms for industrial use cases. Its main goal is to accelerate and improve computations, data science, and physical system simulations through novel computing paradigms.

One of the main application areas is combinatorial optimization using quantum techniques such as the Quantum Approximate Optimization Algorithm (QAOA) and Quantum Annealing, as well as quantum-inspired approaches like logical tensor networks (e.g., MeLoCoToN). The group also specializes in machine learning techniques using quantum computing and tensor compression, including their application to large language models (LLMs). Additionally, research includes data analysis and processing through quantum and tensorial methods, focusing on anomaly detection and information compression.

ITCL operates a Quantum Computing Laboratory, in collaboration with the University of Burgos, dedicated to developing new quantum technologies. The center also includes a quantum circuit simulator and is currently developing a 34-qubit simulator secured with post-quantum cryptography. This infrastructure will enable deep research in quantum algorithm design and tensor network optimization.

OUR NEUROMORPHIC COMPUTING TEAM

The team includes experts in engineering, computer science, automation, and design, along with professionals specializing in technology transfer.

Erik Skibinsky Gitlin

FPGA Development Lead

PhD in Physics from the University of Granada with four years of experience in FPGA development. Currently leads the Neuromorphic and FPGA Computing group, focusing on the design and optimization of advanced architectures for neuromorphic computing, post-quantum encryption, and signal processing. His work spans from implementing neural models on FPGAs to exploring new strategies to enhance computational efficiency in neuromorphic systems. He is skilled in VHDL, HLS, embedded Linux, C/C++, and Python.

OUR QUANTUM COMPUTING TEAM

This area is composed of highly qualified researchers dedicated to continuous innovation and development.

Alejandro Mata Ali

Coordinator of the Quantum Computing Unit

Bachelor’s degree in Physics and Master’s in Nuclear and Particle Physics. Co-author of scientific publications in the fields of tensor networks, digital quantum computing, and quantum combinatorial optimization.

Specialist in tensor networks and digital quantum computing, with experience in designing and implementing new tensorial and quantum algorithms. His main research areas include combinatorial optimization, artificial intelligence, quantum cybersecurity, and anomaly detection.

NEUROMORPHIC COMPUTING CAPABILITIES

High-Level Synthesis (HLS) Design for Rapid FPGA Prototyping

HLS methodology enables the design of FPGA or Application-Specific Integrated Circuit (ASIC) systems using a C/C++ description, typically accompanied by a testbench. One key advantage of HLS is that designs can be compiled like any other program, making debugging easier than with traditional Hardware Description Languages (HDLs). Another benefit is the reduction of errors: once the design has been simulated against its testbench and produces the desired results, the generated hardware is much less likely to hide edge cases with incorrect behavior, although this still depends on how thoroughly the testbench covers the design.

Traditional FPGA Design (HDL) with VHDL

While HLS is useful for algorithmic designs (e.g., sequential mathematical computations), it becomes less effective for highly complex or non-linear systems. In such cases, the classic FPGA/ASIC design flow using VHDL is more appropriate. VHDL is the preferred hardware description language for institutions like the European Space Agency and is the most widely used in Europe.

Debugging with Modern Techniques (VUnit, cocotb, VSCode, etc.)

HDL-based development has traditionally been tied to vendor-specific tools, often creating inefficiencies in the industry—such as outdated development environments, limited integration with high-level languages like Python, or high costs in multidisciplinary projects (hardware + driver + PCB development).

These challenges have led to the emergence of open-source initiatives to streamline workflows. At ITCL, we stay continuously up to date to remain at the forefront of these technologies.

Continuous Integration

Continuous Integration (CI) refers to the use of automated, high-quality testing procedures that run with each code change. CI helps ensure that newly introduced code passes the existing tests and does not break existing functionality.

Embedded Linux and Bare-Metal Development

FPGA designs are rarely standalone. Typically, they need to interface with a complete computing system. This requires deep architectural knowledge and the ability to write software that connects the FPGA design to the target application. Two approaches are used: bare-metal, with no operating system, and embedded Linux, where the design runs within a Linux OS. The team is experienced in both, selecting the most suitable option depending on the project’s needs.

QUANTUM COMPUTING CAPABILITIES

Solving Systems of Linear Equations

Quantum algorithms such as HHL (Harrow-Hassidim-Lloyd) and the Variational Quantum Linear Solver (VQLS) can, under suitable conditions, solve systems of linear equations efficiently. These problems are foundational across data processing and physical system simulations, where extracting properties of solutions or feeding them into other algorithms is essential.

Combinatorial Optimization

A key productivity driver in industry, combinatorial optimization includes problems like warehouse storage optimization or delivery route planning. Quantum approaches such as QAOA (Quantum Approximate Optimization Algorithm) and Quantum Annealing offer approximate methods that may outperform classical algorithms in finding optimal or near-optimal solutions.
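Problems of this kind are commonly handed to QAOA or a quantum annealer as a Quadratic Unconstrained Binary Optimization (QUBO) or equivalent Ising model. The sketch below shows that encoding for MaxCut on a hypothetical four-node ring graph and solves it by classical brute force, which stands in for the quantum solver and is practical only at toy sizes. The function names and the graph are assumptions made for illustration.

```python
from itertools import product


def maxcut_qubo(edges):
    """Build QUBO coefficients whose minimum energy equals minus the maximum cut.

    Each edge (i, j) contributes -x_i - x_j + 2*x_i*x_j, which is -1 when the
    edge is cut (x_i != x_j) and 0 otherwise.
    """
    q = {}
    for i, j in edges:
        q[(i, i)] = q.get((i, i), 0) - 1
        q[(j, j)] = q.get((j, j), 0) - 1
        q[(i, j)] = q.get((i, j), 0) + 2
    return q


def qubo_energy(q, bits):
    """Evaluate sum of Q[i, j] * x_i * x_j for a tuple of 0/1 values."""
    return sum(weight * bits[i] * bits[j] for (i, j), weight in q.items())


def brute_force_minimum(q, n):
    """Classical stand-in for the quantum solver: try all 2**n assignments."""
    return min(product((0, 1), repeat=n), key=lambda bits: qubo_energy(q, bits))


if __name__ == "__main__":
    ring = [(0, 1), (1, 2), (2, 3), (3, 0)]  # hypothetical example graph
    q = maxcut_qubo(ring)
    best = brute_force_minimum(q, 4)
    print(best, qubo_energy(q, best))
```

The brute-force search grows as 2^n with the number of binary variables, which is why approximate methods, quantum or classical, become relevant for industrial problem sizes.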

Quantum Machine Learning (QML)

QML has seen rapid growth in recent years, introducing novel algorithms, models, and tools. Quantum systems can work within exponentially large data spaces, offering new opportunities to handle and extract insights from large-scale data more efficiently and effectively.

Tensor Networks

A quantum-inspired technique with growing popularity, tensor networks offer efficient information compression and operation via tensor structures. ITCL has extensive experience in this area, applying tensor networks to simulate quantum systems, extract dataset properties, and optimize artificial intelligence models by reducing computational complexity.
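To make the compression idea concrete, the sketch below stores an n-qubit GHZ state as a matrix product state (one common tensor network layout) and recovers individual amplitudes by multiplying small matrices. The GHZ state is chosen only because its exact representation is tiny; the helper names are hypothetical, and states with more entanglement need larger matrices than the 2x2 ones used here.

```python
import math


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))]
            for i in range(len(a))]


def ghz_mps(n):
    """Return site tensors for an n-qubit GHZ state (n >= 2).

    tensors[site][bit] is the matrix selected by that qubit's value.
    """
    first = [[[1.0, 0.0]], [[0.0, 1.0]]]                           # 1x2 matrices
    middle = [[[1.0, 0.0], [0.0, 0.0]], [[0.0, 0.0], [0.0, 1.0]]]  # 2x2 matrices
    last = [[[1.0], [0.0]], [[0.0], [1.0]]]                        # 2x1 matrices
    return [first] + [middle] * (n - 2) + [last]


def amplitude(mps, bits):
    """Contract the chain of matrices picked out by a bitstring."""
    result = mps[0][bits[0]]
    for site, bit in zip(mps[1:], bits[1:]):
        result = matmul(result, site[bit])
    return result[0][0] / math.sqrt(2)  # overall normalization of the GHZ state


def parameter_count(mps):
    """Count the numbers stored across all site tensors."""
    return sum(len(row) for site in mps for matrix in site for row in matrix)


if __name__ == "__main__":
    mps = ghz_mps(4)
    print(amplitude(mps, (0, 0, 0, 0)), amplitude(mps, (0, 1, 0, 0)))
    print(parameter_count(ghz_mps(34)), "stored numbers versus", 2 ** 34, "amplitudes")
```

For this particular state the tensor network needs 8n - 8 stored numbers, while a dense state vector needs 2^n amplitudes. That gap is what the compression exploits, although the saving shrinks as the entanglement of the state grows.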

RESEARCH PROJECTS

INMERBOT – Immersive Robotic Inspection Tech

INMERBOT is an R&D project with a clear scope: to advance knowledge of teleoperation and management of multi-robot systems in highly immersive environments for inspection and maintenance applications.

Duration: 2021-2024

Cardhin – Dynamic Inductive Charging for EVs

Development of an electric vehicle recharging system using hydrogen based on renewable sources.

Duration: 2020-2023

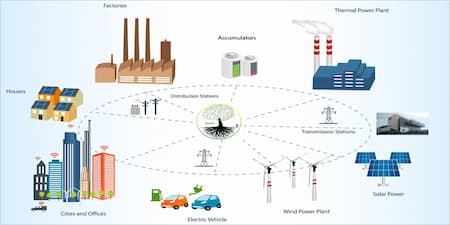

Brain EN – Energy Efficient Microgrid Technologies

The Brain EN Consortium is committed to a 21st-century energy model based on distributed generation integrated into microgrids with their own generation and self-consumption. These microgrids are inherently sustainable, secure, flexible, clean, and efficient, and must be managed and coordinated with the general grid by means of new intelligent algorithms.

Duration: 2020-2023

TRIAGE SMART DECISION – AI Based COVID Triage System for Hospitals

Preliminary triage in hospital emergency departments using artificial intelligence techniques, performing the initial screening to distinguish COVID from non-COVID patients.

Duration: 2021-2023

FitDrive project kick-off meeting

The objective of FITDrive is to reduce traffic accidents by early identification of driving impairments, focusing on professional drivers.

IBERUS – Biomedical Tech for NMS Degenerative Pathologies

IBERUS is the name of the Network of Excellence formed by four Technology Centers (CCTT) that have defined a Strategic Program to stimulate the Cervera 15 Priority Technology, which falls under technologies for health, both in the centers' own research and development activities and in the business and clinical context.

Duration: 2021-2023